OpenAI has officially expanded the availability of its Advanced Voice Mode to web browsers, allowing users to hold real-time, natural conversations directly from their desktops. The feature was previously limited to the mobile apps. This rollout gives creators and businesses that do most of their production work on desktop machines a more integrated experience.

The update emphasizes lower latency and more expressive vocal inflection, which makes conversations with the AI feel more intuitive and fluid.

While the mobile version of this technology has been noted for its ability to respond to visual cues, the current web implementation focuses exclusively on the audio and text experience.

According to MediaPost, the web rollout does not yet include the live video capabilities that let the model see and respond to a user's surroundings through a camera. Despite this omission, the move to the web is a significant step toward making sophisticated audio tools accessible to a wider range of professional users.

Enhancing Desktop Workflow Integration

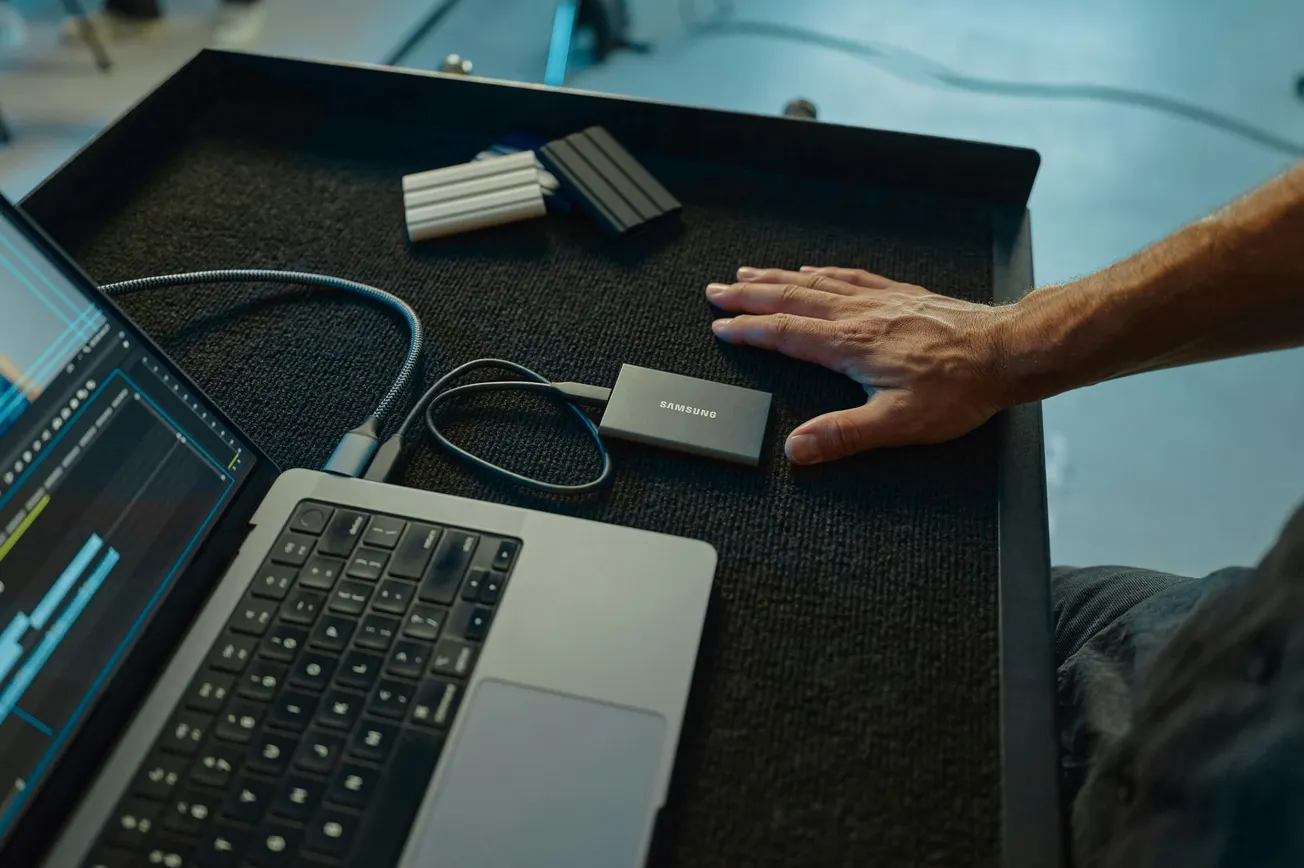

The move to bring Advanced Voice Mode to the browser addresses a common friction point for professionals who spend the majority of their workday on computers rather than mobile devices. For educators, researchers, and content creators, the ability to converse with an AI model while simultaneously managing documents or editing software can significantly improve efficiency.

This hands-free interaction allows users to brainstorm ideas, practice scripts, or troubleshoot technical issues without constantly switching between devices.

In many business environments, the desktop remains the central hub for high-stakes communication and content production. By building these advanced audio capabilities into the browser, OpenAI is positioning its tools as part of the standard professional toolkit. Browser access also means that people without the latest mobile hardware can benefit from the expressiveness and conversational depth of the new voice model.

Applications for Creators and Educators

The expressive nature of Advanced Voice Mode provides unique advantages for those in the podcasting and video production space. Creators can use the tool to simulate interviews, practice vocal pacing, or receive instant feedback on narrative structures. Because the model can understand and mimic nuances in tone and emotion, it serves as a sophisticated sounding board for developing audio-first content.

In educational settings, the tool can support language practice or tutoring in complex subjects where a spoken explanation works better than written text. The natural flow of conversation reduces the cognitive load typically associated with traditional AI interfaces, which often demand careful prompt engineering and rigid input. As more users adopt these tools, the barrier to entry for producing high-quality audio scripts and educational modules continues to fall.

The Omission of Video Features

One of the most discussed aspects of this update is the lack of computer vision integration on the web platform. In mobile versions, the AI can use the device camera to identify objects, read physical documents, or provide feedback on visual demonstrations. The absence of this feature on the web suggests that OpenAI is prioritizing audio stability and low latency for the initial desktop launch.

For businesses that require visual collaboration, such as those demonstrating products or reviewing video equipment setups, the mobile app remains the primary choice. For most administrative, creative, and communicative tasks, however, the audio-centric web version is a robust solution. As the technology matures, support for visual input on the web may eventually close the gap between the mobile and desktop experiences.

Future Implications for Media Production

The rollout of Advanced Voice Mode signals a broader shift in how media professionals interact with technology. As voice-driven interfaces become more capable, the traditional reliance on keyboards and mice may diminish for certain creative processes.

This evolution aligns with the industry goal of reducing technical friction, allowing storytellers to focus more on the narrative and less on the mechanics of the software.

As businesses continue to integrate these tools into their daily operations, the focus will likely shift toward privacy and security. Ensuring that conversational data is handled responsibly remains a priority for organizations adopting AI for internal communications or brand storytelling.

For now, the expansion of these tools to the web provides a powerful new way for human creators to leverage machine intelligence in their pursuit of better stories.