Overcoming Hardware Restrictions in AI Development

The rapid expansion of artificial intelligence has introduced a significant infrastructure challenge for creators and businesses: hardware dependency. Most AI-driven tools for video and audio production are currently optimized for a narrow range of hardware, creating a bottleneck that can drive up costs and limit scalability. Gimlet Labs recently secured $80 million in Series A funding to address this issue by developing technology that allows AI models to function across multiple chip architectures without requiring extensive code modifications.

This development is particularly relevant as the demand for high-performance computing continues to outpace the availability of specific industry-standard processors. For organizations relying on AI for tasks such as automated video editing, noise suppression, or synthetic voice generation, the ability to switch between different hardware providers offers a layer of operational flexibility that was previously unavailable.

Addressing the Problem of Vendor Lock-In

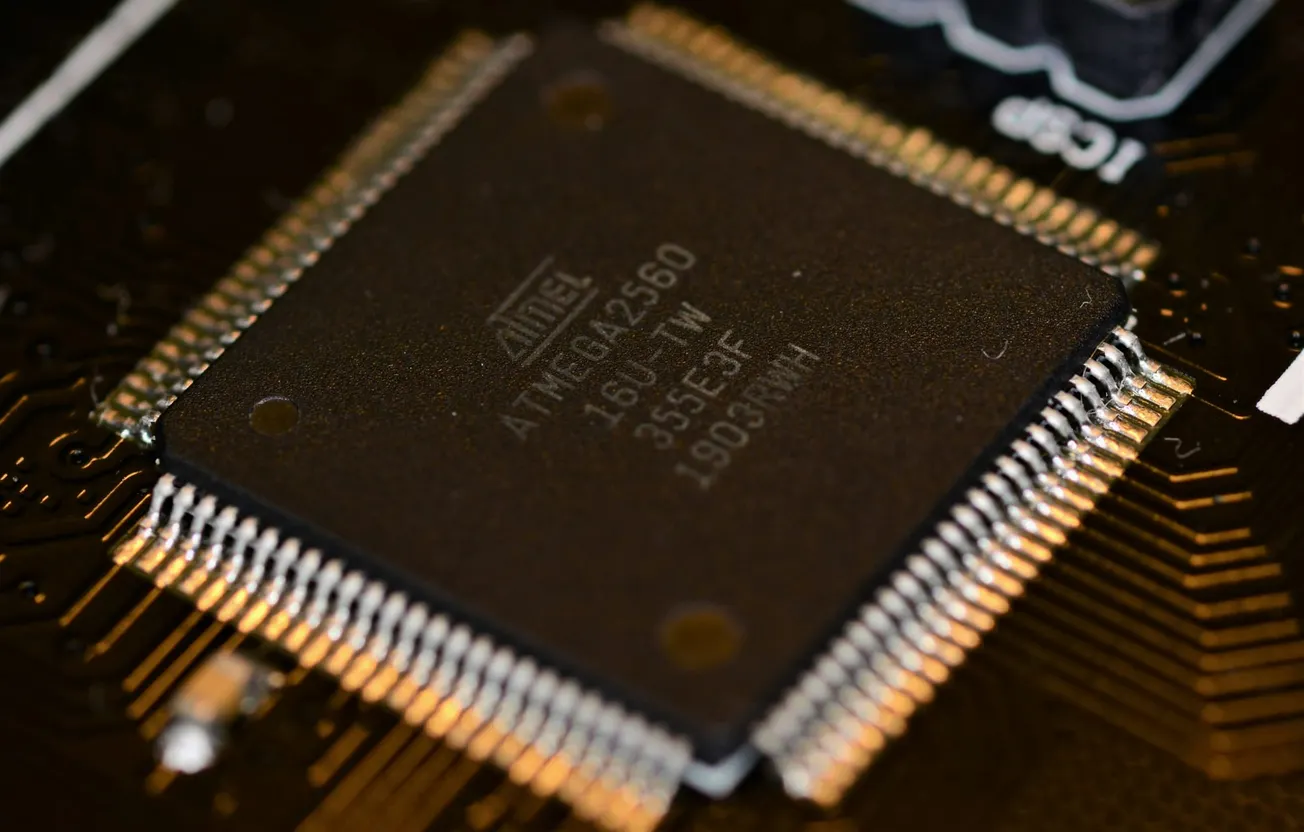

A primary hurdle in the current media technology landscape is vendor lock-in, where software is tied to specific hardware ecosystems like Nvidia's CUDA platform. According to reports from The Tech Buzz, Gimlet Labs is building an abstraction layer that enables AI workloads to run on chips from AMD, Intel, ARM, and specialized manufacturers like Cerebras and d-Matrix.

By removing the need for developers to write hardware-specific code, this technology functions as a bridge between the software and the silicon. For content teams, this means that the AI tools used for rendering high-resolution video or processing complex audio layers can be deployed on whatever hardware is most cost-effective or available at the time. This flexibility is essential for maintaining production schedules when certain components are in short supply.
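To make the idea of an abstraction layer concrete, here is a minimal sketch in Python. It is not Gimlet Labs' API, which the source does not describe. The `Backend` interface, the two backend classes, and the `pick_backend` and `reduce_gain` helpers are all hypothetical names invented for illustration, and the "workload" is a trivial stand-in for a real audio or video model.

```python
from abc import ABC, abstractmethod


class Backend(ABC):
    """Hypothetical interface that hides chip-specific details from the caller."""

    name: str

    @abstractmethod
    def run(self, samples: list[float]) -> list[float]:
        """Process a list of audio samples and return the result."""


class GpuBackend(Backend):
    name = "gpu"

    def run(self, samples: list[float]) -> list[float]:
        # A real backend would call a vendor runtime here (assumption);
        # this stand-in simply halves each sample.
        return [s * 0.5 for s in samples]


class CpuBackend(Backend):
    name = "cpu"

    def run(self, samples: list[float]) -> list[float]:
        # Same result as the GPU stand-in, produced on different hardware.
        return [s * 0.5 for s in samples]


def pick_backend(preferred: list[str], available: dict[str, Backend]) -> Backend:
    """Return the first preferred backend that is actually installed."""
    for name in preferred:
        if name in available:
            return available[name]
    raise RuntimeError("no supported backend available")


def reduce_gain(samples: list[float], backend: Backend) -> list[float]:
    """Application code: identical no matter which chip does the work."""
    return backend.run(samples)


if __name__ == "__main__":
    # Only a CPU is present on this machine, so the GPU preference falls through.
    available = {"cpu": CpuBackend()}
    backend = pick_backend(["gpu", "cpu"], available)
    print(backend.name, reduce_gain([1.0, 2.0], backend))
```

The point of the sketch is that `reduce_gain` never mentions a chip. Swapping hardware changes only which backend object is passed in, which is the kind of portability the article describes.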

Impact on Production Costs and Efficiency

Inference, the process of running a trained AI model to perform a task, accounts for a substantial portion of the total cost of maintaining AI-integrated workflows. When businesses are forced to use premium hardware due to compatibility issues, these costs can become prohibitive. The approach taken by Gimlet Labs allows workloads to be routed dynamically to the most efficient available processor, which can lower the cost of each inference whenever a cheaper compatible chip is available.
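The routing idea can be sketched as a simple cost comparison. The example below is an illustration under stated assumptions, not a description of how Gimlet Labs implements routing. The `Processor` class, the `route` function, the processor names, and every price and throughput figure are made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Processor:
    name: str
    hourly_cost: float        # USD per hour (illustrative figure, not real pricing)
    inferences_per_hour: int  # throughput (illustrative figure)
    available: bool = True

    def cost_per_1k(self) -> float:
        """Cost in USD of running 1,000 inferences on this processor."""
        return self.hourly_cost / self.inferences_per_hour * 1000


def route(processors: list[Processor]) -> Processor:
    """Pick the available processor with the lowest cost per 1,000 inferences."""
    candidates = [p for p in processors if p.available]
    if not candidates:
        raise RuntimeError("no processor available")
    return min(candidates, key=Processor.cost_per_1k)


if __name__ == "__main__":
    # Hypothetical fleet with invented numbers.
    fleet = [
        Processor("premium-gpu", hourly_cost=4.00, inferences_per_hour=40_000),
        Processor("alt-accelerator", hourly_cost=1.50, inferences_per_hour=20_000),
        Processor("cpu", hourly_cost=0.40, inferences_per_hour=2_000),
    ]
    for p in fleet:
        print(f"{p.name}: ${p.cost_per_1k():.3f} per 1k inferences")
    print("routed to:", route(fleet).name)
```

With these invented figures the cheapest hourly option (the CPU) is not the cheapest per inference, because its throughput is low. A router that compares cost per unit of work rather than cost per hour avoids that trap, and it falls back to the next-best chip when the preferred one is marked unavailable.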

Lowering the technical and financial barriers to AI deployment supports the broader mission of making professional-grade production tools more accessible. When hardware is no longer a restrictive factor, smaller studios and independent creators can use the same advanced automation features as larger enterprises. This democratization of technology means the quality of the final output depends more on the creator's vision than on the size of their hardware budget.

Future Implications for Media Workflows

As the AI infrastructure market continues to evolve, the shift toward heterogeneous computing (using several different processor types together) is expected to become the standard. Investor backing for initiatives like those from Gimlet Labs points to a future where software remains portable and adaptable.

For the video and audio industry, this means that the next generation of editing suites and recording platforms will be more resilient. Creators can expect more consistent performance across different devices, from high-end workstations to mobile setups. By focusing on interoperability, the industry is moving toward a model that prioritizes the user experience and the creative process over the underlying technical constraints.

Staying informed about these infrastructure shifts allows businesses to make better long-term investments in their content production stacks. As hardware compatibility becomes less of a friction point, the focus can return to what matters most: telling compelling stories through high-quality audio and video.